We’ve covered what Coding Agents are, how they work, and how to run them safely. Now: what should your organization do about them?

This isn’t hypothetical. Your developers are already using ChatGPT, Copilot, and autonomous agents like Pi and Claude Code. The question isn’t whether they’ll enter your organization — they already have. It’s whether you’ll shape that adoption or be shaped by it.

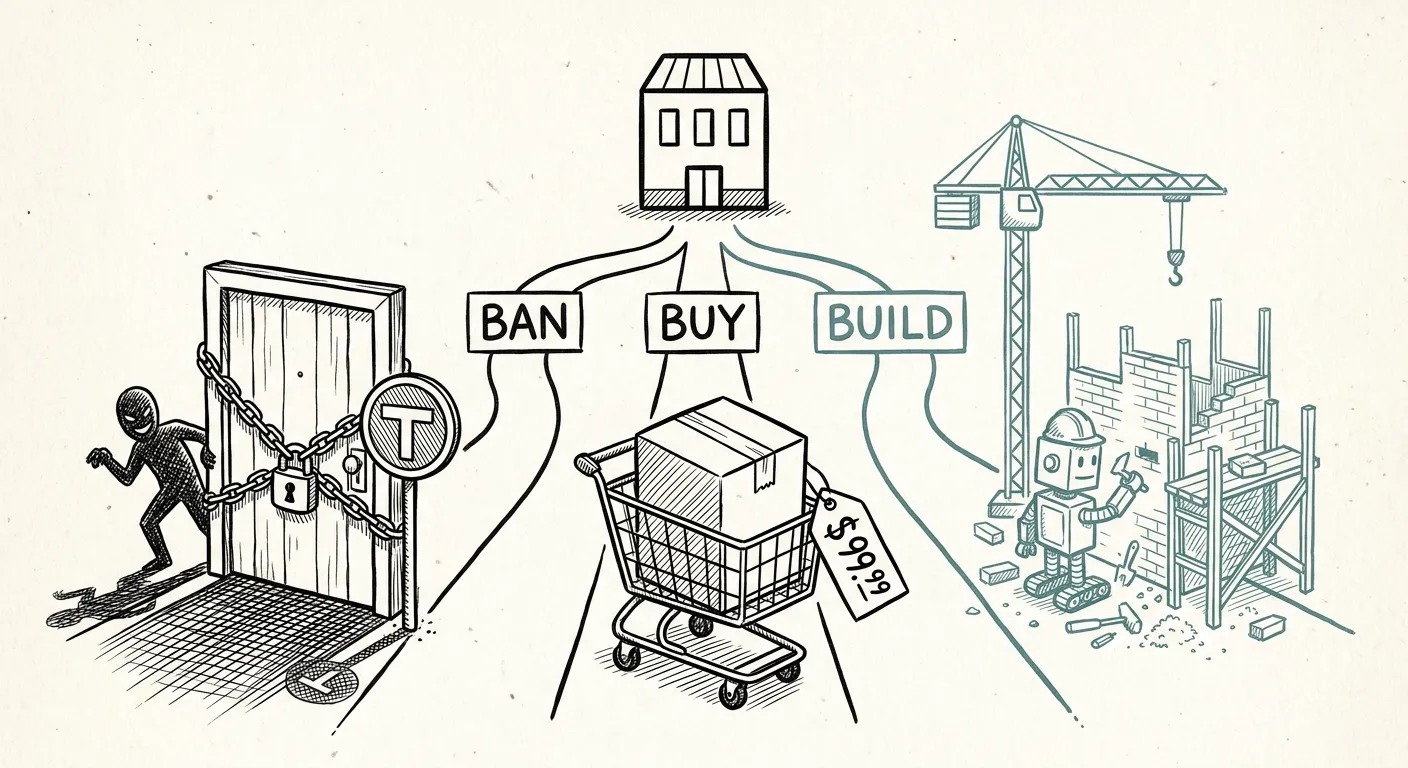

The Three Strategic Options

Every enterprise faces three fundamental paths when confronted with a disruptive technology. For Coding Agents, they map cleanly as follows:

Option 1: Ban 🚫

The most conservative response: forbid the use of Coding Agents entirely. No Pi, no Claude Code, no Copilot Agents. Developers may use chat-based LLMs in read-only mode (if that), but no autonomous code execution.

The argument for banning is straightforward: Coding Agents introduce risks that are hard to quantify and harder to control. An agent could introduce subtle bugs, leak proprietary code to external APIs, or create licensing issues by generating code derived from copyrighted sources. For regulated industries — medical devices, finance, automotive — the compliance risk alone may seem to justify a ban.

The argument against banning is equally straightforward: it doesn’t work. When you ban a tool that makes developers more productive, you don’t eliminate the demand; you drive it underground. We’ve seen this firsthand in our professional network (examples anonymized for confidentiality). Shadow infrastructure emerges even in companies that aren’t primarily software-driven, where research teams use agents to assemble analysis scripts outside any sanctioned channel. In companies that ban Coding Agents, developers create personal API keys, run agents on their own machines, and operate outside version control and review processes. The code still gets written by agents, but now it’s invisible, unmonitored, and unreviewed.

Banning doesn’t eliminate risk. It eliminates visibility into the risk.

Option 2: Buy 💰

The middle path: adopt a commercial Coding Agent solution such as Claude Code, GitHub Copilot Workspace, or a similar vendor-managed product. You get standardization, vendor support, and a clear chain of responsibility.

The advantages are real:

- Standardization: Everyone uses the same tool, configured the same way.

- Security: Vendors invest in safety features — content filtering, code scanning, audit logs.

- Support: When something goes wrong, you have a vendor to call.

- Compliance: Commercial tools come with baseline certifications (SOC 2, GDPR processing agreements) that are expensive to build yourself. These are necessary baselines, not comprehensive solutions — regulated industries face additional frameworks no commercial agent currently addresses.

The trade-offs are equally real:

- Cost: Per-seat or per-token pricing becomes a significant line item at scale.

- Limited customizability: You’re constrained to the vendor’s feature set. If your workflow doesn’t fit, you adapt to the tool.

- Vendor lock-in: Developers build muscle memory; CI/CD pipelines integrate with the vendor’s API. Switching becomes expensive.

- Data sovereignty: Code and prompts flow through the vendor’s infrastructure — a hard constraint for some organizations.

Option 3: Build 🔧

The ambitious path: invest in internal expertise and infrastructure to deploy, monitor, and customize open-source Coding Agents like Pi to your organization’s specific needs.

This is the highest-leverage option, but also the highest-investment one:

- Full control: You decide what the agent can do, what data it accesses, and how it’s sandboxed.

- Customization: Tailor the agent’s System Prompt, Tools, and Skills to your codebase and coding standards. Pi’s minimal architecture makes this straightforward — you’re extending, not working around.

- Data sovereignty: Code never leaves your infrastructure — assuming a local or open-source model, or a dedicated cloud instance with appropriate data processing agreements. If you build on Pi but call OpenAI’s API, the orchestration is local but the inference is not. This caveat matters for strict data residency.

- Monitoring: Build custom dashboards tracking agent usage, code quality, and productivity — data about how agents affect your organization, not vendor marketing.

- Internal knowledge: Your team develops deep expertise — not just how to use agents, but how to configure, extend, and troubleshoot them. This strategic capability compounds over time.
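The data sovereignty caveat above (local orchestration, remote inference) can be made concrete with a small configuration check. This is a minimal sketch, not part of Pi or any vendor tool: the function name `inference_stays_internal` and the host `llm.internal.example` are hypothetical, and a hostname allow-list is only a first filter. It says nothing about where an approved host is physically operated.

```python
from urllib.parse import urlsplit


def inference_stays_internal(base_url: str, allowed_hosts: set[str]) -> bool:
    """Return True if the inference endpoint's host is on the allow-list.

    A host matches if it equals an allowed host or is a subdomain of one.
    This is an illustrative policy check, not a guarantee of data residency.
    """
    host = urlsplit(base_url).hostname  # None if the URL has no network location
    if host is None:
        return False
    host = host.lower()
    return any(
        host == allowed or host.endswith("." + allowed)
        for allowed in (a.lower() for a in allowed_hosts)
    )


if __name__ == "__main__":
    # Hypothetical internal model gateway; replace with your own hosts.
    allowed = {"llm.internal.example"}
    for url in ("http://llm.internal.example:8000/v1", "https://api.openai.com/v1"):
        verdict = "internal" if inference_stays_internal(url, allowed) else "EXTERNAL"
        print(f"{url} -> {verdict}")
```

A check like this could run at agent startup or in CI so that a misconfigured endpoint fails loudly instead of silently sending code to an external API.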

The costs are significant:

- Upfront investment: Setting up infrastructure, writing custom Skills, configuring Dev Containers, and training the team takes time and money.

- Ongoing maintenance: Open-source agents evolve. Skills need updating. Dev Container images need rebuilding. This is operational overhead a commercial vendor would absorb.

- Talent scarcity: This is the single biggest risk. People who can architect, deploy, and maintain Coding Agent infrastructure are rare — and in high demand. Before committing to Build, honestly assess whether you can attract and retain this expertise.

- Open-source lock-in: Building on Pi means committing to its architecture, skill format, and tool model. If its direction diverges from your needs, you face the same adapt-or-migrate decision as with a commercial vendor — except you also carry the maintenance burden if you fork.

A Decision Framework

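Before scoring, you need data. Audit your current agent usage; you may be surprised by what you find. Then apply this weighted scoring model: score each option from 0 to 5 per criterion (higher is better), multiply each score by the criterion’s weight, and sum. Plug in your own weights: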

| Criterion (weight) | Ban 🚫 | Buy 💰 | Build 🔧 |
|---|---|---|---|
| Data sovereignty (0.25) | 5 | 2 | 5 |
| Cost efficiency (0.20) | 2 (no tool cost, high opportunity cost) | 2 | 3 |
| Customizability (0.20) | 0 | 1 | 5 |
| Developer productivity (0.20) | 0 | 4 | 4 |
| Time to value (0.10) | 5 (instant) | 4 | 1 |
| Monitoring capability (0.05) | 0 | 3 | 5 |
| Weighted Score | 2.15 | 2.45 | 4.00 |

These scores assume an organization prioritizing data sovereignty and customizability — typical for DeepTech, public sector, and regulated industries. With these weights, Build wins. But change the weights and the answer changes: if time-to-value dominates, Buy may be right. We should be transparent — our analysis draws on a small number of real-world observations, not a comprehensive survey. The framework is a starting point, not a definitive answer.
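To see how sensitive the result is to the weights, the scoring model can be reproduced in a few lines. The sketch below copies the weights and scores from the table above; the second weight set (`FAST_WEIGHTS`) is a hypothetical example of an organization where time to value and productivity dominate, not data from our observations.

```python
# Weights and per-option scores copied from the table above.
WEIGHTS = {
    "data_sovereignty": 0.25,
    "cost_efficiency": 0.20,
    "customizability": 0.20,
    "developer_productivity": 0.20,
    "time_to_value": 0.10,
    "monitoring": 0.05,
}

SCORES = {
    "Ban": {"data_sovereignty": 5, "cost_efficiency": 2, "customizability": 0,
            "developer_productivity": 0, "time_to_value": 5, "monitoring": 0},
    "Buy": {"data_sovereignty": 2, "cost_efficiency": 2, "customizability": 1,
            "developer_productivity": 4, "time_to_value": 4, "monitoring": 3},
    "Build": {"data_sovereignty": 5, "cost_efficiency": 3, "customizability": 5,
              "developer_productivity": 4, "time_to_value": 1, "monitoring": 5},
}

# Hypothetical alternative: speed and productivity matter most.
FAST_WEIGHTS = {
    "data_sovereignty": 0.05,
    "cost_efficiency": 0.10,
    "customizability": 0.10,
    "developer_productivity": 0.30,
    "time_to_value": 0.40,
    "monitoring": 0.05,
}


def weighted_scores(weights: dict[str, float],
                    scores: dict[str, dict[str, int]]) -> dict[str, float]:
    """Return the weighted score per option, rounded to two decimals."""
    total = sum(weights.values())
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"weights sum to {total}, expected 1.0")
    return {
        option: round(sum(weights[c] * per_criterion[c] for c in weights), 2)
        for option, per_criterion in scores.items()
    }


if __name__ == "__main__":
    for label, weights in (("table weights", WEIGHTS), ("fast weights", FAST_WEIGHTS)):
        result = weighted_scores(weights, SCORES)
        best = max(result, key=result.get)
        print(f"{label}: {result} -> best: {best}")
```

With the table weights this reproduces the 2.15, 2.45, and 4.00 shown above; with the hypothetical fast weights, Buy comes out ahead. Run it with your own weights before drawing conclusions.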

Note: the most common state — having no policy at all — scores even worse than Ban. At least a ban is a deliberate decision. No policy means no visibility, no control, and no accountability.

Human Oversight: The Spectrum

Regardless of which path you choose, you’ll need to decide on the level of human oversight your organization requires. This isn’t binary — it’s a spectrum:

- Level 1 (Copy-paste only): Developers may use chat-based LLMs for suggestions, but must manually copy code into the editor. No agent execution. Maximum oversight, minimum productivity gain.

- Level 2 (Supervised execution): The agent can execute actions, but every action requires human approval before execution. High oversight, moderate productivity gain.

- Level 3 (Review-after execution): The agent executes autonomously, but all changes must be reviewed and approved before committing. Good balance of oversight and productivity.

- Level 4 (Autonomous with guardrails): The agent operates independently within defined boundaries (sandboxed, limited tool set), with periodic human review. Maximum productivity, requires mature monitoring.

The spectrum isn’t strictly linear: Level 2 can be slower than Level 1 if approval latency is high — the agent waits for human input between steps. Productivity gains accelerate most between Level 2 and Level 3, where the agent executes multiple steps autonomously between reviews. Most organizations should start at Level 2 or 3 and evolve toward Level 4 as they build confidence and monitoring.
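One way to make the chosen level enforceable rather than aspirational is to encode it as a policy that tooling can check. The following is a minimal sketch under our own assumptions: the names `OversightLevel`, `Policy`, and `can_run_action` are hypothetical and do not correspond to any agent's real configuration format. The four levels are numbered in the order listed above.

```python
from dataclasses import dataclass
from enum import IntEnum


class OversightLevel(IntEnum):
    COPY_PASTE_ONLY = 1
    SUPERVISED_EXECUTION = 2
    REVIEW_AFTER_EXECUTION = 3
    AUTONOMOUS_WITH_GUARDRAILS = 4


@dataclass(frozen=True)
class Policy:
    agent_may_execute: bool      # may the agent run actions at all?
    approve_each_action: bool    # human approval before every action
    review_before_commit: bool   # human review before changes are committed
    periodic_review: bool        # sampled review after the fact


# Flags follow the level descriptions; anything a description does not
# mention is set to False, which is an assumption of this sketch.
POLICIES = {
    OversightLevel.COPY_PASTE_ONLY: Policy(False, False, False, False),
    OversightLevel.SUPERVISED_EXECUTION: Policy(True, True, False, False),
    OversightLevel.REVIEW_AFTER_EXECUTION: Policy(True, False, True, False),
    OversightLevel.AUTONOMOUS_WITH_GUARDRAILS: Policy(True, False, False, True),
}


def can_run_action(level: OversightLevel, human_approved: bool) -> bool:
    """Decide whether a single agent action may run under the given level."""
    policy = POLICIES[level]
    if not policy.agent_may_execute:
        return False
    if policy.approve_each_action:
        return human_approved
    return True


if __name__ == "__main__":
    for level in OversightLevel:
        print(level.name, can_run_action(level, human_approved=False))
```

The per-action approval at Level 2 is where approval latency enters: every call to `can_run_action` waits on a human, which is why Level 2 can be slower than Level 1 in practice.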

The Leader’s Imperative

If software development is your biggest cost center and you don’t have clear guidelines for Coding Agents, the question isn’t whether you should act — it’s why you don’t already know what’s happening. Leaders who invest in understanding this technology — beyond the marketing — will make better decisions. Not at the implementation level, but enough to understand:

- What Coding Agents actually do in practice — not what marketing promises

- How they affect code quality and documentation: they can produce clean code, but also subtle bugs and superficial docs

- What your developers are already doing — the shadow IT problem is real

- What monitoring looks like — agent usage, code quality, and productivity should be on your dashboard

Coding Agents are not a passing trend. They are a structural shift in how software is built — comparable to the shift from manual testing to CI/CD, or from on-premise to cloud. The organizations that adapt early, with clear strategy and informed leadership, will compound their advantage. The ones that ignore, ban, or blindly adopt will find themselves at a growing disadvantage.

The Cost of Inaction

The most expensive option isn’t Ban, Buy, or Build — it’s indecision. While you deliberate, your developers are using agents anyway — without oversight, without review, without your knowledge. This is precisely why Build is the recommended path for organizations that can afford it: it gives you the visibility and control that Ban eliminates and Buy partially provides.

The age of autonomous coding is here. The question is no longer whether to engage, but how.

This concludes our series on Coding Agents in Practice. From understanding what they are, to how they work under the hood, to running them safely, to making strategic decisions for your organization — we hope this series gives you the foundation to make informed choices about one of the most significant shifts in software development practice.